AI company responds after its ad was banned by officials over disturbing claims

The software could be manipulated to do far more than it was supposed to

One company has been forced to speak out after seeming to suggest that its software could be used to remove women's clothes, which, let’s be honest, isn’t exactly a good look in 2026.

Many clocked that the evolution of generative AI could have some serious drawbacks, and you only have to look at how far videos of Will Smith eating spaghetti have come to see it's getting increasingly hard to distinguish what's real and what's fake.

It all seemed like a bit of a laugh when deepfakes first took off and people were creating AI videos of Donald Trump sucking Elon Musk's toes, but a much darker side has since emerged. As well as the harrowing story of a teen who took his own life after being blackmailed with AI nudes, there was the recent drama involving Musk's Grok and a probe that revealed it had made inappropriate images of children as young as 11.

As well as Ashley St. Clair threatening to sue, three Tennessee teenagers have launched an actual lawsuit against Grok.

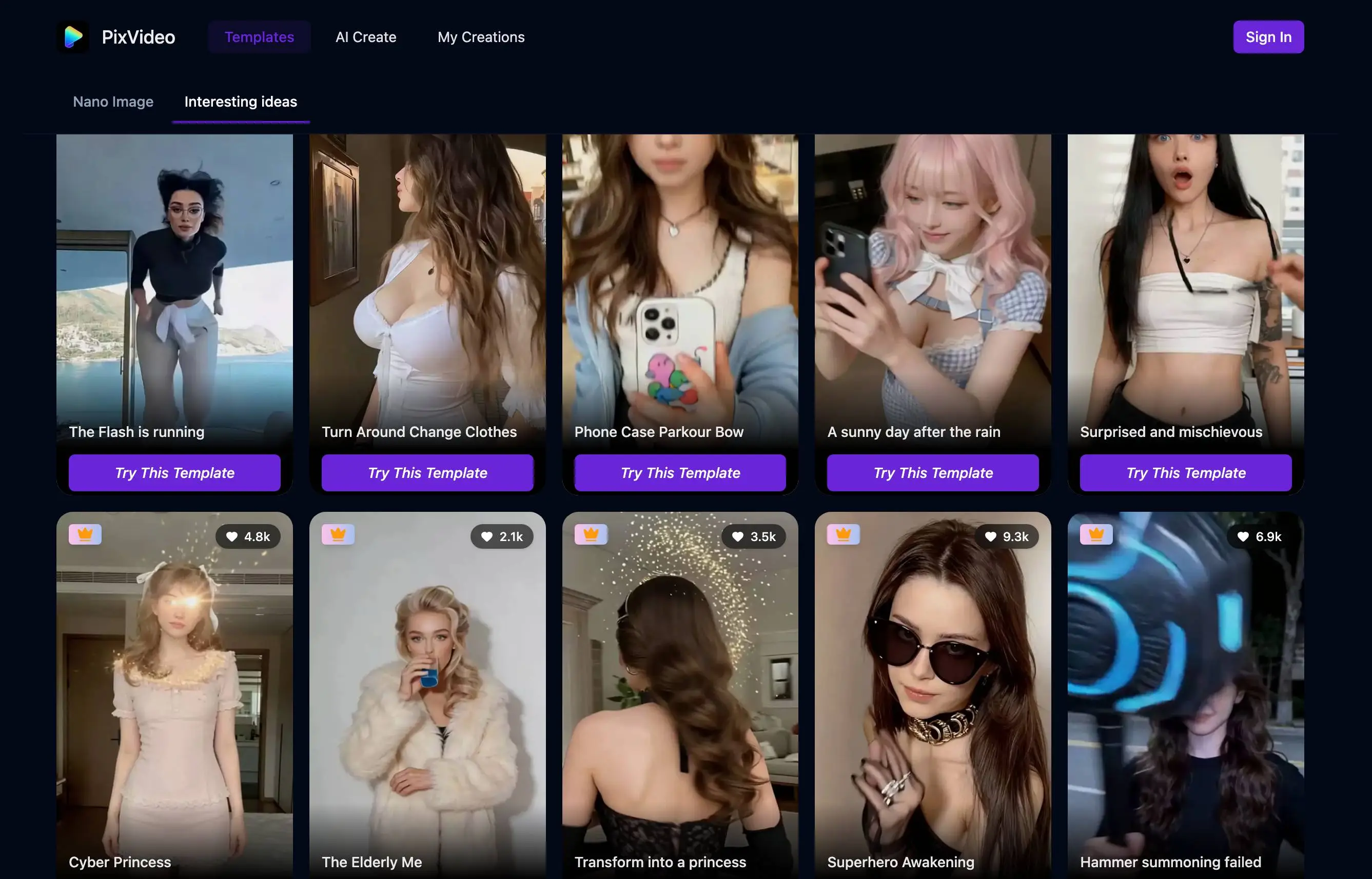

This could be the start of an uncomfortable trend, as The Independent now reports on a complaint that has been upheld against PixVideo. The outlet explains how a YouTube ad promoting PixVideo – AI Video Maker appeared in January 2026 and showed off supposed 'before' and 'after' images of a young blond woman. A red mark suggested you could erase her clothing, and text across the image included a heart-eye emoji and simply said: "Erase anything".

Eight people complained to the Advertising Standards Authority, claiming the ad objectified and sexualized women. They also challenged whether it could be viewed as "irresponsible, offensive and harmful."

Trading as PixVideo - AI Video Maker, Saeta Tech Ltd said that while it understood the ASA’s concerns about how the advert could be interpreted, its terms and conditions prevent the creation of nude or sexually explicit content. The company said automated AI detection flags this kind of imagery, and reiterated that PixVideo isn't designed to remove clothing or create nude imagery.

Admitting that the ad didn't convey this message, it also accepted that the visuals shown implied the opposite.

The ASA's ruling refers to a "failure in creative execution and oversight."

Recognizing that the advert could cause serious offense under the UK Code of Non-broadcast Advertising and Direct & Promotional Marketing (CAP Code), Saeta Tech Ltd also agreed that it could be misconstrued as objectifying women or as suggesting that the app could digitally expose someone without consent.

As well as removing the ad, it's said that Saeta Tech Ltd has suspended advertising on all media platforms while a 'comprehensive internal audit' is conducted. More than just taking down the ads, the company vowed to upgrade the review and approval process for further campaigns. This apparently includes "stricter creative guidelines, enhanced internal review, and mandatory compliance checks to ensure future ads did not imply, encourage or normalise harmful, sexualised or non-consensual portrayals."

Ultimately, the ASA ruled that the advert breached CAP Code (Edition 12) rules 1.3 (Social responsibility), 4.1, and 4.9 (Harm and offence). Although the authority stated that users weren't actually able to make explicit content, the implication that women could be reduced to sexual objects was still there. The ASA added: "Because the ad implied that viewers could use an app to remove a woman’s clothing, we considered it condoned digitally altering and exposing women’s bodies without their consent."

Although the ASA welcomed Saeta Tech Ltd's willingness to take the ad down, the ad was still considered a "harmful gender stereotype and was likely to cause serious offence."