Warning: This article contains discussion of suicide which some readers may find distressing.

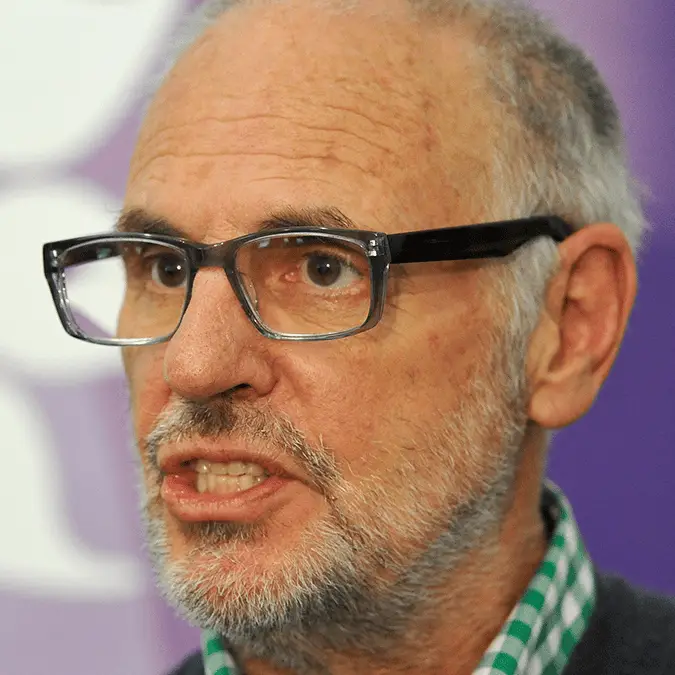

Philip Nitschke, inventor of the Sarco 'suicide' pod, faces further controversy over the device, and over whether it will continue to be used, following the death of a 64-year-old woman from the USA.

In September 2024, several people were arrested in Switzerland in connection with the woman's death, including Florian Willet, CEO of the right-to-die organisation "The Last Resort". In a further tragic turn of events, Willet later took his own life, with Nitschke confirming this was done with the help of a 'specialized organization'.

Invented by the euthanasia campaigner in 2017, the Sarco pod is designed to allow a person to end their own life peacefully through hypoxia.


Although the Sarco pod has only been used once, Nitschke has said there are plans for it to continue operating, with artificial intelligence potentially deciding whether a person has the mental capacity to use it.

Under the current process, people are asked three specific questions to determine whether they understand the consequences of using the Sarco pod.

Speaking to Euronews Next, Nitschke said he believes AI could soon replace psychiatrists in deciding who has the 'mental capacity' to end their own life. Calling out the current system, he said: "We don’t think doctors should be running around giving you permission or not to die. It should be your decision if you’re of sound mind."

As the outlet notes, the proposal has sparked fierce debate over whether AI can be trusted with a decision as monumental as assisted dying. It comes after a run of stories linking AI chatbots, mental health, and users taking their own lives. In November 2025, seven lawsuits were filed against OpenAI over its ChatGPT chatbot, four of which referred to suicide, with the remaining three citing other mental health crises.

We've covered numerous concerning final chat logs, with critics saying AI chatbots still can't reliably flag when someone needs further help.

Nitschke has been involved in assisted dying for decades, notably making history in 1996 when he became the first doctor to legally administer a voluntary lethal injection.

While the technology has evolved and the subject remains something of a taboo, Nitschke's position has only hardened, and he reiterates his belief that "the end of one’s life by oneself is a human right."

Still, he has generated further controversy by proposing AI as a potential judge of someone's mental capacity.

Standing by his vision for the future, Nitschke said: "I’ve seen plenty of cases where the same patient, seeing three different psychiatrists, gets four different answers.

"There is a real question about what this assessment of this nebulous quality actually is."

He envisions an AI system that would use a conversational avatar to assess a person's mental state: "You sit there and talk about the issues that the avatar wants to talk to you about. And the avatar will then decide whether or not it thinks you’ve got capacity."

If the AI thinks you're of sound mind, the suicide pod would be activated, and you'd have 24 hours to decide whether to go ahead.

While he claims early versions of this software are already working, they have yet to be independently validated. In the meantime, he sees AI assessments working alongside traditional psychiatric reviews: "Whether it’s as good as a psychiatrist, whether it’s got any biases built into it – we know AI assessments have involved bias. We can do what we can to eliminate that."

Nitschke concluded by admitting he has not found a single psychiatrist who agrees with his plans. Critics have highlighted risks such as emotional distress being mistaken for informed consent, as well as concerns about how transparent, accountable, and ethical it is to hand decisions like this to an algorithm.